Interview Insights

05.27.2021

How to hire great software engineers with interview rubrics

The Karat Team

Structured interview rubrics make it possible for software engineering teams to hire the right candidates for the job. How? By putting candidates on a level playing field and assessing them for competencies that matter. No matter which interviewer they meet with, the candidate and team can be assured that they will be evaluated based on an interview rubric that clarifies a set of competencies that are relevant to the job. Plus, the entire team can be sure that they are discussing the candidate using the same rating scale and shared language to describe the candidate’s performance.

Interviews are too varied for a single structured interview rubric to rule them all. However, we do have some tricks up our sleeves to make it easier. We’ve applied this approach to conducting nearly 100,000 technical interviews for companies like Databricks, MongoDB, Hippo Insurance, and Flatiron Health.

In this guide, we’re going over a three-step process to create interview rubrics with examples to get you started.

But first…

What is an interview rubric?

Interview rubrics refer to a method or scorecard technical hiring teams use to ensure they’re asking similar questions during interviews. This ensures an inclusive and fair recruiting and hiring process in which every candidate gets an equal chance of success because they’re compared using the same criteria.

The criteria are separated by competency and are meant to assess a person’s skills and potential to gain new skills. Many technical hiring teams change these rating scales to clearly describe what “good” looks like for them. Interview rubrics can be adapted to analyze a candidate’s technical abilities or soft skills and spot whether the potential hire has the right past experience.

So, how are these interview rating scales used?

Interview rubrics are important for clarifying which competencies are relevant and guiding the interview so that the hiring manager asks the candidate to demonstrate them. For this reason, every interview rubric is unique to the job’s role and responsibilities — but should be applied to every interview for that role.

It’s best to start using interview rubrics early during the recruiting process for one role. This is because they help you offer the same interview experience to all candidates and ensure you’re filtering competencies for everyone. On top of this, they help reduce bias and assess a candidate based on objective scoring criteria.

While each interview rubric does depend on your company and roles, here’s a general process to get you started with building your next rubric for hiring software engineers:

How to create an interview rubric

- Identify competencies are relevant to the job

- List observable candidate actions and results

- Summarize a completed interview rubric into a hiring recommendation

Identify competencies that are relevant to the job

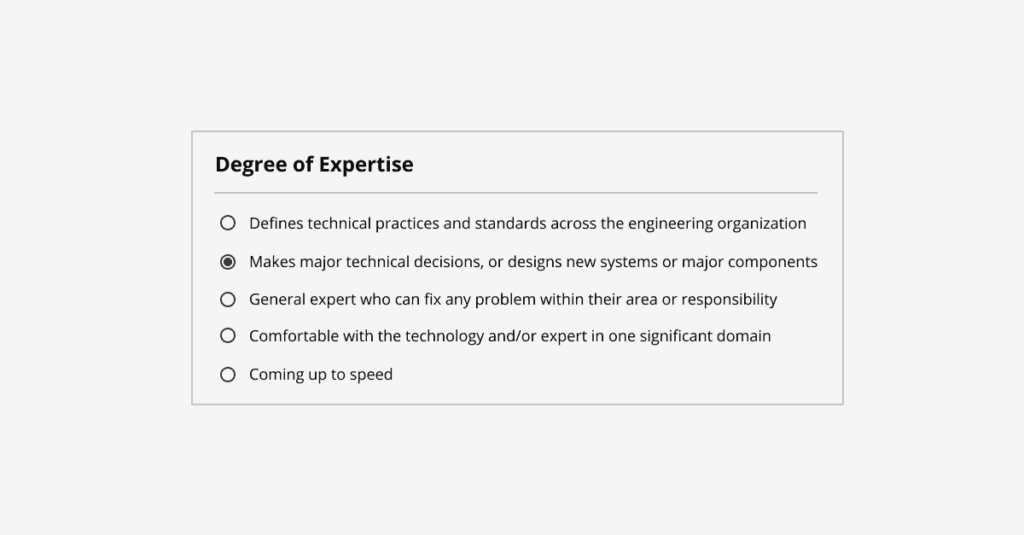

“Degree of expertise” might be an important topic in a senior level behavioral interview, while a programming interview might assess “Technical Communication” and “Implementation quality.” Remember, if a competency is on the interview rubric it’s signal. If it’s not, then it’s noise.

At Karat, we use over 75 competencies in a variety of technical interviews. So, there isn’t really a limit on the number of competencies you can select, but be sure they are good ones that produce signal.

So what makes a good competency for a technical interview?

- Relevant to the role

- Does not bias for things that are irrelevant to the role like US cultural awareness or knowledge that can be learned quickly on the job

- Clearly demonstrable in the live interview

Technical assessments ought to be written with the relevant competencies in mind. A great interview gives candidates explicit requests to demonstrate relevant skills in a psychologically safe space. Remember, interviews should never be a mind-reading exercise.

List observable candidate actions and results

Interview rubrics can save a ton of time and hassle for software engineers conducting interviews. All they have to do is check the box that describes the action the candidate took during the technical assessment. This is way easier, more consistent, and less biased than creating a write-up from scratch after every interview.

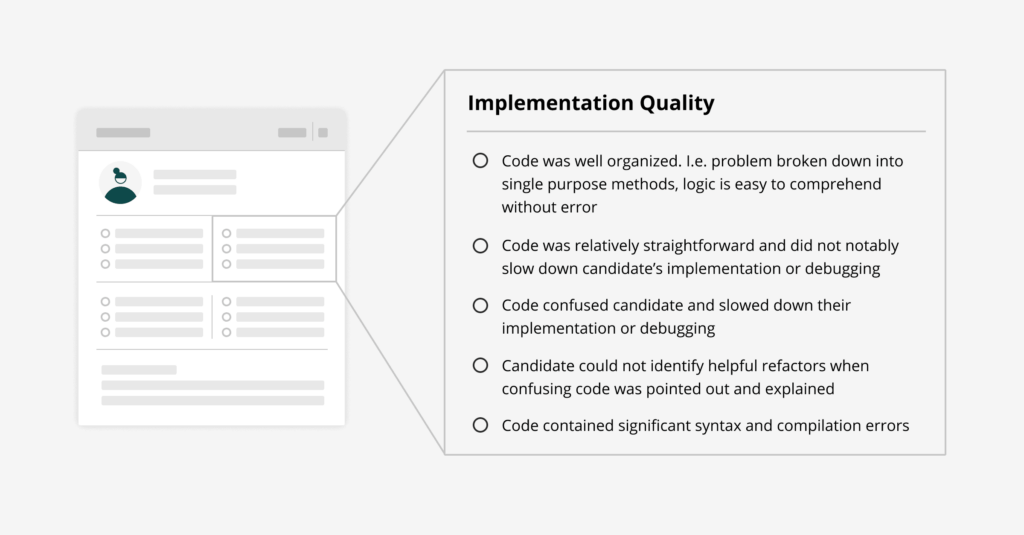

Start by translating competencies into a scale of observable behaviors. In the example below, the competency “implementation quality” is turned into a list of radio buttons, each with a description of the action taken by the software engineer.

If the candidate gets confused about their own code, then the interviewer can conclude that the code is confusing. On the other hand, if the code doesn’t confuse its author, but also there are no specific code-organizing techniques used, then the interviewer can conclude that the implementation quality is fine but not great.

This is great for coding interviews. What about interviews that explore a candidate’s expertise in another area? You can ask informational questions to explore how broadly or deeply a candidate knows a specific coding language or how they approach code review. This can also be applied to behavioral interviews for senior candidates.

Summarize a completed interview rubric into a hiring recommendation

Interview rubrics guide a team clearly to a hiring recommendation. Successful software engineering teams coach their interviewers on:

- The contents of each interview rubric, the interview questions, and how they map to each other

- How to use the interview rubric objectively, without inserting personal preferences. If it’s not on the interview rubric, it’s noise!

- Focusing on conversation and candidate experience. Once interviewers are equipped with an interview rubric and interview questions, they no longer have to think about those things, and they can focus on having a great interview

What about making a hiring recommendation? Different algorithms can be created to lead to a quantifiable result. For example:

- Weight competencies so interviewers know how much that competency impacts or doesn’t impact their yes/no decision

- Pass a certain bar or avoid red flags on each competency. Assign points to each behavior, and score the rubric like a test

- Consider multiple “algorithms for job success” so that great candidates stay in the funnel

Interview rubrics are key to inclusive hiring

Creating diverse software engineering teams doesn’t have to be challenging. The talent pipeline exists. Best practices to improve inclusivity in the technical recruiting process need to be applied consistently. An interview rubric is one of the best tools in your arsenal.

By describing what is meaningful to the technical assessment, interviewers are explicitly guided towards signal. If you notice interviewers coming to debriefs with negative opinions based on irrelevant, noisy indicators, you’ll want to name them. Some of these include:

- Presence of absence of upspeak. This is the raising of one’s intonation at the end of sentences; it’s common in American and Australian English speakers, especially young people and women. It bears no relationship to competence or confidence.

- Shyness. If specific leadership skills are relevant and important, they will be explicitly noted on the interview rubric.

- “Um.” Filler words and a small amount of rambling is likely the result of nervousness and stress.

- Presence or absence of clarifying questions. When specific guidance hasn’t been given to the candidate, they may ask for more information. Likewise, they may not know it’s okay to ask. This is often true for women and people of color.

For a complete look at how a structured scoring rubric can look like, discover some of our interview rubric samples you can use during technical interviews.

Related Content

AI Hiring

03.05.2026

AI has raised the stakes on every engineering hire. Most evaluation processes weren’t built for that. Here’s how the best engineering organizations are closing the gap. For most of the last decade, growing engineering capacity meant growing headcount. You hired aggressively, accepted some variance in quality, and assumed that volume and velocity would carry teams […]

Interview Insights

02.18.2026

Same-day technical interviews are transforming how software teams compete for top engineering talent. By enabling candidates to schedule and complete interviews within hours of receiving an invite, companies dramatically improve time-to-hire, offer acceptance rates, and candidate experience. Learn how Karat's global network of trained Interview Engineers delivers scalable, 24/7 interview coverage—and why this operational edge is critical in the race for AI and software engineering talent.

AI Hiring

01.21.2026

AI is changing technical hiring by accelerating every stage of the funnel and making it harder to identify genuine software engineering skills from AI-assisted output. Candidates can now easily produce polished resumes and correct interview answers, even if they lack the necessary skills or knowledge. This degrades hiring signals and is forcing companies to rethink […]