AI Hiring

01.14.2026

The Growing Skills Gap in the AI Era – And How NextGen Identifies True Engineering Strength

The Karat Team

Conventional wisdom about AI’s impact on employees is that it acts as an equalizer. It’s expected to offset employees’ skill gaps, but our data shows that isn’t the case for software engineers. AI is actually a multiplier. Strong engineers who can leverage AI are producing outsized value, which means the gap between them and less skilled engineers is widening, not shrinking.

Our recent survey of more than 300 SVPs and CTOs found that 73% say strong engineers are now worth at least 3x their total compensation, while 59% say weak engineers deliver net zero or negative value in the AI era.

AI also introduces a new set of skills that software engineers need to have.

- Before AI: Engineering performance was essentially measured on a two-dimensional matrix: technical ability + communication/soft skills.

- In the AI era: Today, a strong engineer requires skills across three dimensions: technical ability + communication/soft skills + AI proficiency.

This shift in engineering skills doesn’t just impact individual engineers. It also affects team and company performance.

- High-performing teams have only small or few AI-enabled engineering skills gaps. Their engineers are consistently strong across all three dimensions. As a result, they can move faster, are more productive, produce high-quality code at scale, and can modernize their workflows.

- Weaker teams with large skills gaps will struggle to effectively collaborate, produce uneven output, and deliver work more slowly. Stronger engineers will need to spend more time reviewing the work of weaker engineers, managers may need to provide more oversight, and AI adoption will vary.

Over the next three years, there will be significant differences in productivity. Our data shows that organizations that use both human and AI interviews are more likely than those that use only human or only non-human assessments to expect coding errors to decrease, the time it takes to bring new products or features to market to decrease, and the number of products and features they release to increase.

In response to AI, companies are adapting their workforce strategies. Sixty-nine percent of surveyed employers say that they plan on hiring new people with skills to design AI tools and enhancements. Slightly fewer (62%) say they’ll hire new people with skills to better work alongside AI.

Hiring is an effective way to build high-performing teams. However, some companies still rely on hiring processes that haven’t been updated to reflect relevant skills in the AI era and the challenges of interviewing when AI tools are easily accessible.

This results in false positives that prevent companies from seeing a candidate’s AI-enabled engineering skills gap until after they’re hired, or false negatives that cause companies to pass on highly skilled candidates. Both are costly mistakes to make.

To help your organization avoid this, read on to learn:

- What a strong engineer in the AI era looks like.

- Why current interviews can’t detect these skills gaps.

- How Karat NextGen reveals these differences immediately.

How AI Changed Engineering Work (and Hiring) Faster Than Teams Could Adapt

AI use is now standard. The 2025 State of AI-assisted Software Development report found that 90% of survey respondents use AI, a 14.1% increase from last year.

Although large language models (LLMs) have made it easy for anyone to produce code quickly, they still lack sound, reliable judgement. This is reflected in the World Economic Forum’s The Future of Jobs Report 2025. Although AI and big data is the fastest-growing skill, technological literacy comes in third. Over 40% of employers already consider both of these skills to be core skills, and they’re projected to become even more critical by 2030.

Successful engineering now depends less on coding and more on the following meta-skills:

- AI literacy: Prompt effectively, understand the capabilities and limitations of AI tools, and recognize when AI output needs validation or refinement.

- Reasoning: Think critically about problems, evaluate AI suggestions, analyze tradeoffs to choose the best path forward, and make sound decisions when faced with ambiguity or incomplete information.

- Debugging: Identify, investigate, and fix issues in AI-generated code.

- Architecture sense: Understand how components interact across systems, design robust and scalable solutions that integrate AI, and maintain a holistic view of how individual code changes affect the larger codebase.

The New AI-Era Engineering Spectrum: What Differentiates Top Performers

Engineers who excel in the AI era tend to:

- Use AI to enhance problem-solving rather than replace their thinking. They treat AI as an assistant, using it to accelerate their work by generating ideas, exploring alternatives, and speeding up iteration. They combine their own judgement with AI-generated suggestions so that they maintain ownership of the solution and final decision-making.

- Validate, refine, and debug LLM output. Thirty percent of surveyed respondents currently have little to no trust in code generated by LLMs. Strong engineers know that AI output may include errors or hallucinations, so they carefully review AI-generated code, test thoroughly, and debug issues before solutions are implemented. This ensures the final product is high-quality and correct.

- Navigate ambiguity and large codebases comfortably. They can work in complex, real-world environments where requirements are often unclear and codebases span multiple files and teams. To overcome these challenges, they ask questions, identify key dependencies, and make informed tradeoffs.

- Communicate clearly and justify decisions. High performers can explain tradeoffs, assumptions, risks, and their reasoning to stakeholders and teammates. This is particularly important when enterprises have large or distributed teams, as clear communication makes collaboration and scaling easier.

- Show deep reasoning under pressure. When faced with difficult challenges or tight deadlines, these engineers are still able to demonstrate rigorous, analytical thinking. Complex reasoning remains a problem for AI, making it an important skill for engineers to retain.

In contrast, engineers who are still developing AI-era skills often:

- Paste AI output without understanding it. When engineers treat AI-generated code as ready to ship, this increases the chances of bugs and security risks.

- Struggle without deterministic prompts. While they may succeed when armed with specific, tightly defined prompts that they can give to AI, they find it more difficult to get their desired outputs when they have to design their own prompts.

- Fail to identify LLM hallucinations. They struggle to recognize when AI outputs are wrong. This is extremely risky when some models hallucinate as much as 79% of the time. Without the ability to recognize when this happens, these engineers are more likely to let mistakes slip into production code.

- Cannot explain their own code. Since they don’t understand the code that AI generated, they struggle to explain how it works and justify design choices.

- Freeze when AI-generated solutions break. Without understanding the underlying logic, they’re unable to debug or adjust the solution when it doesn’t work as expected.

To identify engineers who have the skills that are crucial for success, create structured interview rubrics. Rubrics ensure that your interviews evaluate relevant competencies and that candidates are measured consistently across interviewers, ultimately helping you reach a hiring decision that’s fair and predictive.

Why Traditional Technical Interviews Miss the AI Skills Gap

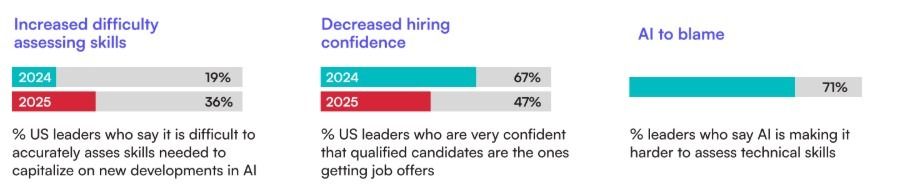

According to our data, AI has decreased hiring confidence in the U.S. There are several reasons why U.S. tech leaders feel it’s more difficult to accurately assess candidates, and they all stem from traditional technical interviews.

AI Makes Coding-Based Interviews Unreliable

Traditional technical interviews often lean heavily on take-home coding tests that focus on whether the candidate provided the correct answer. Candidates can use AI assistants to easily generate working code, making it impossible to distinguish between those who truly understand how to solve problems and write code and those who are simply relying on AI.

Surface-Level Correctness = False Signal

Traditional interviews reward the final answer, instead of the reasoning behind it. With AI assistance, it’s easy for candidates to produce the right solution without demonstrating other skills that have become more important in the AI era, such as problem solving, communication, and systems design. Interviewers get an inaccurate assessment of the candidate’s ability, since it’s based on correctness, which can mask a lack of deeper understanding or critical thinking.

Companies Get False Positives and False Negatives

False positives are candidates who are heavily dependent on AI. They are a real concern for companies, as 8% of surveyed professionals say they rely “a great deal” on AI at work, 20% say “a lot,” and 37% say “a moderate amount.” False positives can appear highly capable in traditional interviews, but often struggle to perform when they need to do more than just copy AI outputs.

False negatives are candidates who don’t optimize for traditional interview tricks such as puzzle-style coding tests or memorizing patterns. Although they have strong reasoning skills and experience, they may be screened out before they can show their strengths.

These issues lead companies to believe they’re hiring strong engineers until those hires struggle to perform in the complex, ambiguous environment of real-world engineering.

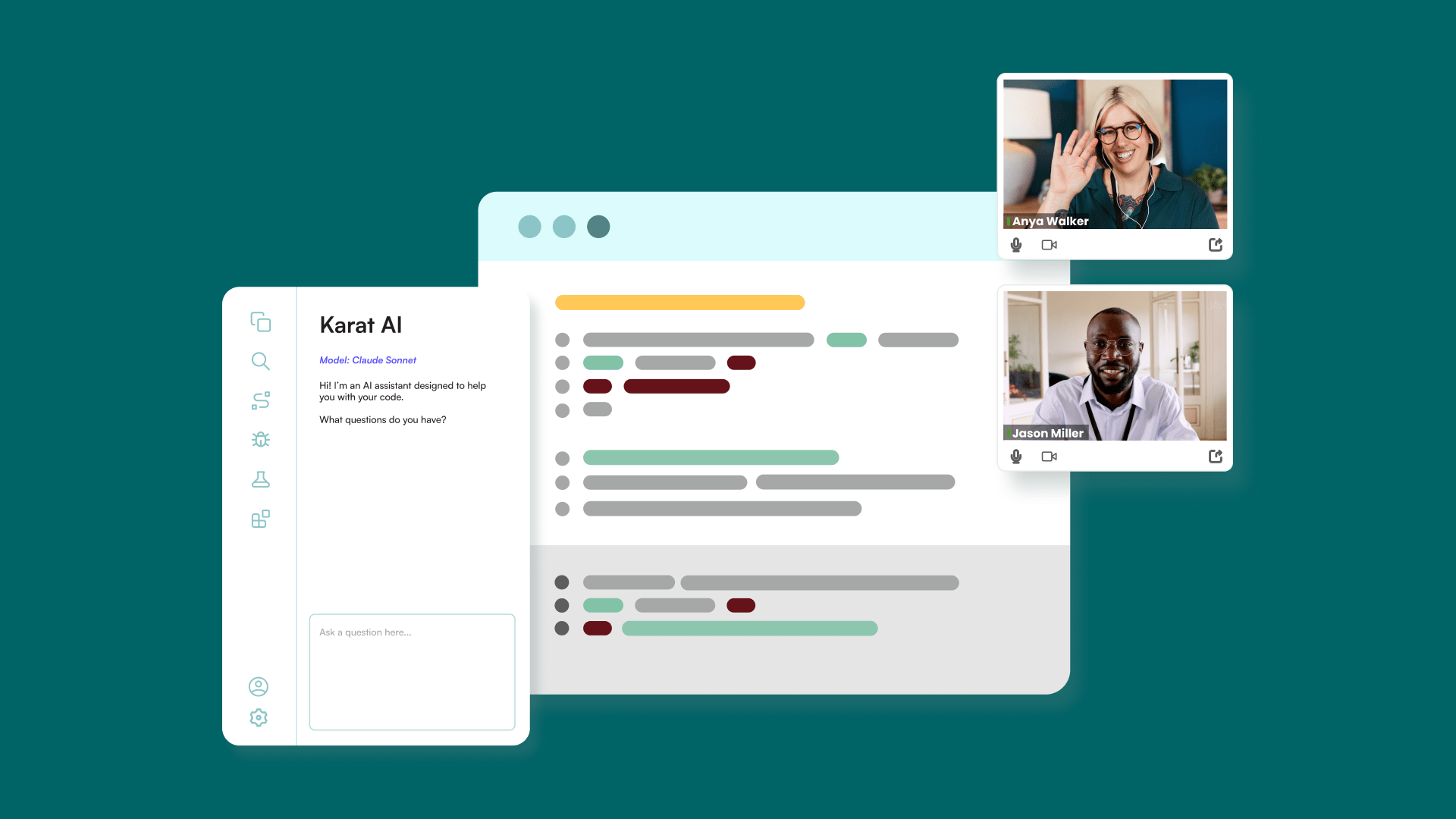

How NextGen Reveals True AI-Era Engineering Strength

Karat NextGen solves the issues that come with using traditional technical interviews in the AI era. As the first human-led, AI-enabled talent evaluation solution, NextGen can cut your time-to-hire by up to 50% and save 40 to 50 engineering hours per hire.

Real-World Environment Reveals Real Capability

NextGen places candidates in a realistic development environment with:

- A multi-file codebase that mirrors the messy, interconnected systems that engineers work with. This exposes how well they navigate existing code, understand dependencies, and make changes without breaking surrounding functionality.

- Complex system-level problems that require reasoning across multiple components, evaluating tradeoffs, and breaking down problems.

- An integrated AI assistant. Every candidate has access to the same AI tools, which turns AI into a controlled variable and lets interviewers see how effectively candidates can work with it.

Human and Evolving Content Make a Difference

There are two components of NextGen that make it more effective in identifying engineers who have the skills needed to succeed in the era of AI.

Human Interviewers

AI has leveled the playing field when it comes to the answers that candidates produce, so interviewers have to focus on how candidates got there instead. This is why having a human interviewer is critical. Human-led interviews uncover authentic reasoning, as a human can observe and dig into how candidates reach their solution.

Karat Interview Engineers (IVEs) are experienced software engineers who are trained to:

- Recognize shallow patterns that indicate surface-level understanding or memorization.

- Ask follow-up questions that reveal a candidate’s depth of understanding and reasoning.

- Identify candidates who are dependent on AI, as weak logic usually collapses under scrutiny.

Ever-Evolving Interview Content

The AI landscape is constantly shifting. This means that the skills that are most relevant today may change months or years from now.

Instead of treating interview content as something that’s developed once and used for years, NextGen is built to evolve with AI. It keeps talent evaluation processes in line with the latest trends and technologies so that you’re always evaluating candidates against the current AI landscape.

Rubrics Built for the AI Era

NextGen uses a scoring rubric that’s specifically designed to measure the skills that matter in an AI-enabled world:

- AI literacy: How well candidates understand AI’s capabilities and limitations, and how effectively they use AI.

- Codebase navigation: Whether candidates can move through unfamiliar, multi-file repositories, identify relevant components, understand dependencies, and anticipate the impact of their changes across the codebase.

- Problem decomposition: The candidate’s ability to break large, complex, or ambiguous problems into clear, incremental steps that they can take.

- Communication: How clearly candidates can explain their thinking and collaborate with the interviewer.

- Architecture and product reasoning: The candidate thinks beyond whether the code will work. They also consider scalability, constraints, edge cases, and user impact.

Karat IVEs use these rubrics to turn observable behaviors into objective data that generates an accurate hiring signal.
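As a rough illustration of how rubric observations could be turned into comparable data, the sketch below validates one interviewer's ratings and summarizes them. The dimension names come from the rubric list above; the 1-4 rating scale, the equal weighting, and the `summarize_scorecard` helper are hypothetical assumptions for illustration, not Karat's actual scoring method.

```python
from statistics import mean

# Dimension names taken from the rubric list above.
DIMENSIONS = (
    "ai_literacy",
    "codebase_navigation",
    "problem_decomposition",
    "communication",
    "architecture_and_product_reasoning",
)

# Hypothetical 1-4 rating scale; the article does not describe the real scale.
MIN_RATING, MAX_RATING = 1, 4


def summarize_scorecard(ratings: dict[str, int]) -> dict[str, float]:
    """Validate one interviewer's ratings and return the mean and the weakest score."""
    missing = [d for d in DIMENSIONS if d not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for name in DIMENSIONS:
        if not MIN_RATING <= ratings[name] <= MAX_RATING:
            raise ValueError(f"{name} rating out of range: {ratings[name]}")
    scores = [ratings[d] for d in DIMENSIONS]
    # Equal weighting is an assumption; a real rubric might weight dimensions differently.
    return {"overall": float(mean(scores)), "lowest": float(min(scores))}
```

Requiring a rating for every dimension is the design choice that matters here: it keeps scorecards from different interviewers comparable, which is the consistency benefit a structured rubric is meant to provide.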

Why Human-Led Interviews Matter More in the AI Era

The use of AI in hiring doesn’t diminish the importance of human interviewers. In fact, it increases it. Here’s why:

- AI can’t reliably evaluate AI-assisted solutions. While an AI evaluator can assess syntactic correctness, it struggles to consistently determine whether a candidate understands the solution, effectively used an AI assistant, or caught and corrected hallucinations. Evaluating AI-assisted work requires interpreting intention, judgment, and depth of understanding, which are still uniquely human competencies.

- Candidate experience matters. The hiring process shapes a candidate’s impression of your company. A purely automated or AI-driven experience can feel impersonal or create friction, which impacts your offer acceptance rate and employer brand. Humans build rapport, provide empathy, and ensure the hiring process reflects the company’s values. These qualities create positive candidate experiences, and they can’t be replicated by AI.

- Interview quality needs human judgment. Engineering interviews require dynamic, adaptive conversation. Humans are uniquely able to detect nuance in communication, ask follow-up questions, dig deeper when an answer feels shallow, identify genuine understanding, and recognize when a candidate takes a different but valid approach. This results in interviews that are fair, relevant, and aligned with core competencies.

- Human interviewers avoid safety, fairness, and employer branding issues. Relying solely on AI to evaluate candidates introduces several risks. AI can perpetuate biases. Even advanced models that are designed to curb explicit biases “continue to exhibit implicit ones.” AI can also create negative candidate experiences by misinterpreting explanations or penalizing more creative reasoning or approaches.

The Complete Loop: How NextGen Works With Karat Core

With NextGen, Karat now offers two solutions that work together to provide a complete assessment of software engineering candidates in the context of today’s AI-enabled world.

- Core evaluates traditional fundamentals such as algorithmic thinking, coding proficiency, and engineering fundamentals.

- NextGen evaluates AI-era performance. This includes how candidates direct, refine, and evaluate AI-generated code.

Together, NextGen and Core provide a comprehensive model of engineering excellence. This approach ensures companies identify candidates who not only have a solid engineering foundation, but who can also succeed as AI continues to shape software development.

What AI-Era Engineering Skills Will Matter Next

As AI technology accelerates, the skills that define strong engineering talent will continue to shift, and hiring processes need to keep up. There are already four skills that are becoming more important.

- Agentic AI: Many organizations are either testing or implementing AI agents. McKinsey found that 23% of respondents said their organizations were scaling an agentic AI system, and 39% said they’ve begun experimenting with AI agents. As adoption grows, engineers will work with AI agents that can plan, make decisions, and execute multi-step tasks without human involvement. They’ll need to know how to design, configure, and supervise AI agents to carry out engineering and business goals.

- AI orchestration: Instead of prompting one AI model at a time, engineers will orchestrate multiple AI systems that work together. This involves coordinating workflows, managing dependencies, and maintaining these components.

- End-to-end development: Engineering work is moving from isolated coding tasks toward end-to-end workflows that involve product thinking, system design, and the ability to collaborate with AI. We’re seeing the rise of multi-step “build an app” assessments that will require candidates to scope a feature, design the architecture, use AI to generate code, identify and debug AI outputs, and deliver a working prototype.

- Continuous evolution: As AI models improve and new technologies emerge, the skills that matter the most will keep evolving. Engineers need to continuously learn and adapt in order to keep their skills strong and relevant. For employers, this means updating interview content and rubrics so that hiring criteria stay aligned with the evolving AI landscape.

Karat’s ongoing research and product evolution ensure customers stay ahead of these shifts. Rather than building an AI-enabled interview and letting it stagnate, our team stays on top of the latest innovations and trends and updates our tooling and interview content accordingly.

How to Reduce AI-Era Hiring Risk and Avoid Mis-Hires

As our data shows, AI isn’t leveling the playing field. It’s widening the gap between individuals, teams, and organizations.

Google’s DORA team has also found that individuals with higher AI adoption see higher levels of individual effectiveness, higher levels of code quality, higher levels of product performance, and higher levels of software delivery throughput.

When organizations build teams of AI-proficient engineers, they tend to see increased velocity and better code quality, as well as build an engineering culture that’s aligned with AI. These results help them stay ahead of competitors.

While the benefits of hiring AI-ready engineers are clear, companies need visibility into candidates’ true engineering skills in order to make the right hiring decisions.

Most interview processes were built for a pre-AI world, so they’re prone to false positives and false negatives. These assessments also don’t have the mechanisms to evaluate the AI skills that are now necessary for software engineering.

Karat NextGen makes this invisible gap visible. It provides the visibility that talent and engineering leaders need to build teams that thrive in an AI world. Through a real-world dev environment, experienced interviewers, interview content that reflects complex, real scenarios, and updated rubrics, NextGen generates an accurate hiring signal that enables you to make confident hiring decisions.

Book a walkthrough of NextGen to see how you can implement it for your organization.

FAQs

AI Engineering Skills Gap

AI acts as a multiplier, not an equalizer. Strong engineers use AI to accelerate prototyping, validate outputs, and make better decisions. Engineers lacking strong fundamentals may rely on AI without understanding the outputs, leading to an increase in coding errors. As a result, top performers are delivering outsized value, while the ROI of lower-performing engineers diminishes.

Strong engineers combine technical ability, communication skills, and AI proficiency. This includes AI literacy, critical reasoning, debugging AI-generated code, navigating large codebases, architectural thinking, and clearly explaining tradeoffs and decisions.

Traditional code tests evaluate outputs. These methods attempt to infer hiring signals about a candidate’s reasoning or problem-solving from a working solution rather than evaluating these skills directly. With AI tools, candidates can generate working code without understanding it, making it more difficult to reverse engineer a signal about candidates’ judgment, problem-solving depth, and real-world engineering ability without live interactions.

False positives are candidates who perform well in interviews using AI but struggle on the job due to a shallow understanding. False negatives are strong engineers who are screened out because they don’t optimize for puzzle-style or AI-assisted interview tactics. Both lead to costly hiring mistakes.

Companies need interviews that evaluate how candidates use AI. Strong engineers validate, refine, and debug AI output and can explain their reasoning, while weaker candidates rely on AI blindly and struggle when asked follow-up questions.

NextGen places candidates in real-world, AI-enabled environments with a multi-file codebase and human-led probing. This setup makes AI usage visible and allows interviewers to evaluate reasoning, debugging ability, communication, and architectural thinking using rubrics designed for the AI era.

Now. Candidate behavior has already changed, and AI adoption continues to accelerate. Organizations that delay updating interview rubrics and formats risk higher mis-hire rates, slower teams, and declining engineering productivity over time.
